If you follow SEO closely, you’ve probably noticed how fast the phrase “AI agents” has entered the conversation. Almost overnight, routine SEO automation was rebranded as intelligence. Tools that once generated reports are now described as agents that “analyze,” “decide,” and sometimes even “act.”

From my experience working with tech blogs and content-driven sites, this shift has created more confusion than clarity.

Most people don’t actually know what an AI agent is in practical SEO terms. Worse, many assume that if an agent can run an audit, it can also understand priorities, intent, and risk. That assumption is where problems start.

This article is not about hype. It’s about reality. I want to break down what AI agents for SEO audits actually are, how they work, and where they genuinely add value, before we even talk about risks or advanced use cases.

What Are AI Agents in the Context of SEO Audits?

In simple terms, an AI agent is a system that can perform tasks semi-autonomously by combining data inputs, predefined goals, and decision logic. That’s very different from a traditional SEO tool that just reports metrics.

In SEO auditing, an AI agent typically:

- Pulls data from multiple sources (crawl data, Search Console, analytics)

- Applies rules or learned patterns

- Produces conclusions or recommendations

- Sometimes repeats this process on a schedule

What it does not do is “understand SEO” the way a human does. It doesn’t grasp business priorities, monetization strategy, or long-term brand risk. It operates within boundaries set by its design.

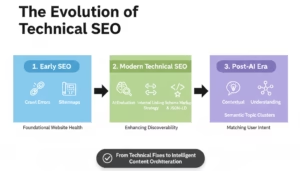

To put this in context:

- Traditional SEO tools show you problems.

- AI-powered features summarize problems.

- AI agents attempt to reason about problems across systems.

That last part is what makes them powerful, and also dangerous if misunderstood.

This evolution sits squarely inside the broader ecosystem of AI Tools for SEO Content and Digital Marketing, where the line between assistance and decision-making is becoming increasingly blurred.

How AI Agents Actually Perform SEO Audits

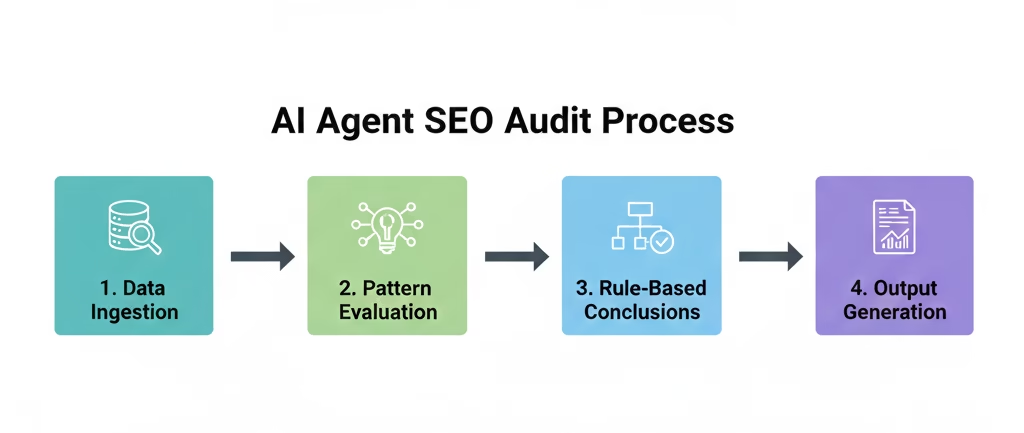

Despite the marketing language, most AI agents today don’t “think.” They operate through structured workflows.

At a high level, an AI agent SEO audit usually follows this process:

- Data ingestion

- Pattern evaluation

- Rule-based or probabilistic conclusions

- Output generation

The intelligence comes from how these steps are chained together.
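To make the chaining concrete, here is a minimal sketch of the four steps as plain functions. It is an illustration, not how any particular product works. The field names (`url`, `status`, `clicks`), the two rules, and the function names are all assumptions invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    url: str
    issue: str
    evidence: str


def ingest(crawl_rows, gsc_rows):
    """Step 1: merge per-URL records from two hypothetical sources."""
    clicks_by_url = {row["url"]: row["clicks"] for row in gsc_rows}
    return [
        {**row, "clicks": clicks_by_url.get(row["url"], 0)}
        for row in crawl_rows
    ]


def evaluate(pages):
    """Step 2: tag each page with the patterns it matches."""
    for page in pages:
        patterns = []
        if page["status"] >= 400:
            patterns.append("broken")
        if page["status"] == 200 and page["clicks"] == 0:
            patterns.append("no_clicks")
        yield page, patterns


def conclude(tagged_pages):
    """Step 3: turn matched patterns into findings (purely rule-based here)."""
    for page, patterns in tagged_pages:
        for pattern in patterns:
            evidence = f"status={page['status']}, clicks={page['clicks']}"
            yield Finding(page["url"], pattern, evidence)


def report(findings):
    """Step 4: produce human-readable output lines."""
    return [f"{f.issue}: {f.url} ({f.evidence})" for f in findings]


# Made-up sample data standing in for a crawl export and a Search Console export.
crawl = [
    {"url": "/a", "status": 200},
    {"url": "/b", "status": 404},
    {"url": "/c", "status": 200},
]
gsc = [{"url": "/a", "clicks": 12}]

lines = report(conclude(evaluate(ingest(crawl, gsc))))
for line in lines:
    print(line)
```

Nothing in this chain "thinks." Each step is simple, and whatever looks like intelligence comes from how the outputs are wired together.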

Data Ingestion and Sources

AI agents typically pull from:

- Crawl data (site structure, URLs, status codes)

- Google Search Console (coverage, performance, queries)

- Analytics data (traffic behavior, engagement)

- Sometimes SERP snapshots or competitor signals

On their own, none of this data is new. What changes is the ability to analyze relationships between datasets automatically, which humans often struggle to do at scale.

This is why AI agents pair well with platforms covered in “AI Tools That Turn Google Search Console Data Into Actionable Insights.”

Reasoning vs Pattern Matching

This is a critical distinction.

Most AI agents do not reason causally. They detect patterns:

- Pages with low traffic and high crawl frequency

- URLs indexed but never ranking

- Internal links pointing to non-priority pages

These patterns are useful signals, but they are not decisions. The agent can tell you something is unusual. It cannot reliably tell you why without context you provide.
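A rough sketch of what this kind of pattern matching looks like in practice is below. The field names, the sample rows, and the thresholds are assumptions. In particular, "zero impressions in 30 days" is only a stand-in for "indexed but never ranking."

```python
from statistics import median

# Hypothetical per-URL rows after joining crawl logs with Search Console data.
pages = [
    {"url": "/guide", "crawls_30d": 40, "clicks_30d": 900, "indexed": True, "impressions_30d": 15000},
    {"url": "/tag/misc", "crawls_30d": 55, "clicks_30d": 0, "indexed": True, "impressions_30d": 0},
    {"url": "/old-news", "crawls_30d": 3, "clicks_30d": 1, "indexed": True, "impressions_30d": 0},
    {"url": "/pricing", "crawls_30d": 10, "clicks_30d": 120, "indexed": True, "impressions_30d": 2000},
]


def flag_patterns(pages, low_clicks=5):
    """Return {url: [pattern, ...]} for pages that match at least one pattern."""
    crawl_median = median(p["crawls_30d"] for p in pages)
    flags = {}
    for p in pages:
        matched = []
        # Crawled more often than the typical page, yet almost no traffic.
        if p["crawls_30d"] > crawl_median and p["clicks_30d"] < low_clicks:
            matched.append("high_crawl_low_traffic")
        # Indexed, but never shown in search results during the window.
        if p["indexed"] and p["impressions_30d"] == 0:
            matched.append("indexed_never_ranking")
        if matched:
            flags[p["url"]] = matched
    return flags


flags = flag_patterns(pages)
print(flags)
```

The output says which URLs look unusual. It says nothing about whether `/tag/misc` exists for a good reason, and that is exactly the gap a human has to fill.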

Task Execution vs Decision-Making

Some agents stop at recommendations. Others attempt execution, such as:

- Suggesting internal link changes

- Flagging pages for noindex

- Prioritizing technical fixes

This is where restraint matters. The more autonomy you give an agent, the higher the risk of compounding errors. Most successful implementations I’ve seen treat AI agents as analysts, not executors.

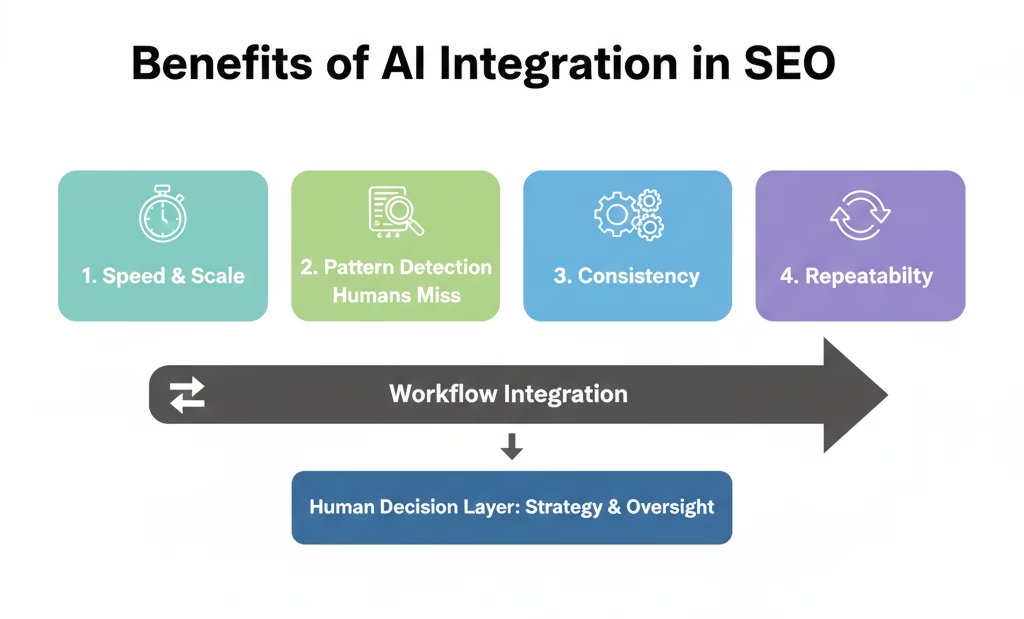

Real Benefits of Using AI Agents for SEO Audits

When used correctly, AI agents can deliver real value. But that value is specific, not universal.

Speed and Scale

This is the most obvious benefit, and it’s legitimate.

AI agents excel at:

- Auditing large sites with tens of thousands of URLs

- Running frequent checks without manual effort

- Detecting changes over time, not just snapshots

What would take a human hours or days can be surfaced in minutes. For enterprise sites or content-heavy blogs, this alone can justify their use.

Pattern Detection Humans Miss

Humans are good at judgment. They’re terrible at spotting subtle correlations across massive datasets.

AI agents can flag:

- Crawl budget inefficiencies

- Internal linking imbalances

- Pages competing for the same intent

- Index bloat patterns that develop slowly

These insights are especially valuable when combined with human review. On their own, they’re signals. With interpretation, they become strategy.

Consistency and Repeatability

Human audits vary depending on who runs them and when. An AI agent doesn’t get tired or distracted, and it applies the same checks on every run.

A well-configured agent will:

- Apply the same logic every time

- Catch regressions early

- Maintain baseline standards across audits

From experience, this consistency is where AI agents deliver the most long-term value. Not because they’re smarter, but because they’re reliable.

That reliability is the foundation for everything else we’ll discuss next: risks, misuse, and where AI agents should never be trusted blindly.
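Catching regressions early mostly comes down to comparing one audit run against the previous one. Here is a minimal sketch, assuming each audit is stored as a mapping from URL to a set of issue codes. The issue codes and URLs are made up for the example.

```python
def diff_audits(previous, current):
    """Compare two audit snapshots of the form {url: set_of_issue_codes}.

    Returns (regressions, fixed), each mapping url -> sorted list of issue codes.
    """
    regressions, fixed = {}, {}
    for url in previous.keys() | current.keys():
        before = previous.get(url, set())
        after = current.get(url, set())
        if after - before:
            regressions[url] = sorted(after - before)
        if before - after:
            fixed[url] = sorted(before - after)
    return regressions, fixed


last_week = {"/a": {"missing_title"}, "/b": set(), "/c": {"redirect_chain"}}
this_week = {"/a": {"missing_title"}, "/b": {"noindex_added"}, "/c": set(), "/d": {"404"}}

regressions, fixed = diff_audits(last_week, this_week)
print("new issues:", regressions)
print("resolved:", fixed)
```

Because the same logic runs every time, a new `noindex_added` on `/b` shows up in the next run instead of in next quarter's traffic report.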

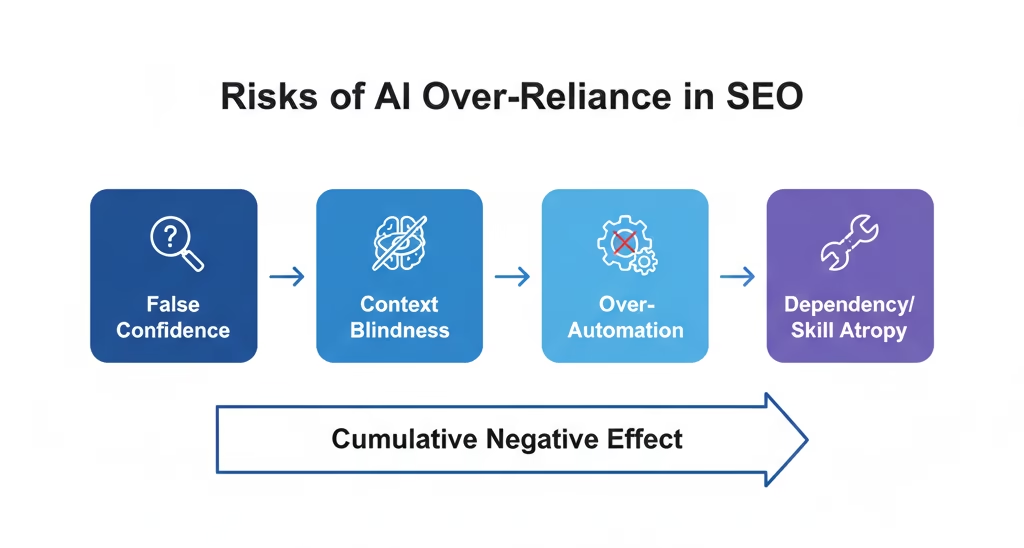

The Hidden Risks Most SEO Blogs Don’t Talk About

AI agents for SEO audits are often presented as neutral, objective, and “smart.” That framing is misleading. The real risk isn’t that AI agents fail loudly. It’s that they fail quietly, while sounding confident.

This is where experienced SEOs get cautious and beginners get burned.

False Confidence From Plausible Output

One of the most dangerous traits of AI systems is how reasonable their output sounds.

SEO is full of gray areas. There are very few universally correct answers. When an AI agent flags something as an “issue” or recommends an action, it often presents that suggestion in clean, authoritative language. The problem is that plausibility is not correctness.

For example, an agent might confidently recommend noindexing hundreds of pages due to “low performance.” On paper, the logic seems sound. In reality, those pages may support internal linking, topical authority, or long-tail discovery. Removing them can quietly collapse rankings weeks later.

This is why “sounds right” is dangerous in SEO. Search performance is not linear. A change that looks correct in isolation can be destructive in context. AI agents don’t feel uncertainty. Humans do, and that hesitation is often what prevents irreversible mistakes.

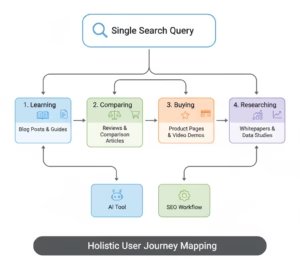

Context Blindness

AI agents do not understand your business.

They don’t know:

- Which pages drive indirect revenue

- Which content supports funnel entry points

- Which URLs exist for trust, not traffic

- Which keywords matter commercially versus informationally

Without this context, an AI agent evaluates SEO purely as a mechanical system. That’s not how search works in practice. SEO lives at the intersection of user intent, monetization, branding, and long-term positioning.

I’ve seen agents flag pages for consolidation that were intentionally separated to target different audience segments. The data looked redundant. The strategy was not.

This is the core limitation of automation. SEO decisions are rarely just technical. When context is missing, “optimization” becomes guesswork.

Over-Automation Damage

The most expensive SEO mistakes I’ve seen in the last two years came from over-automation, not neglect.

AI agents can generate hundreds of recommendations at once. If those recommendations are implemented programmatically or without review, the damage scales instantly.

Examples include:

- Internal links removed at scale, weakening authority flow

- Canonicals changed incorrectly across templates

- Parameter URLs blocked without understanding crawl dependencies

The danger isn’t one bad recommendation. It’s many small bad decisions applied consistently. Humans work slowly, so their mistakes tend to stay small. AI agents make many changes quickly, so one flawed rule gets repeated everywhere it applies.

Dependency and Skill Atrophy

This risk is rarely discussed, but it’s very real.

When teams rely too heavily on AI agents, they stop understanding why things work. SEO knowledge becomes outsourced to systems. Over time, this leads to:

- Blind trust in outputs

- Inability to challenge incorrect findings

- Slower response to algorithm changes

When the agent fails, the team has no intuition left.

This is exactly why I often reference “AI vs Human SEO Where Automation Actually Works.” Automation should sharpen human decision-making, not replace it. Once teams lose fundamentals, they lose leverage.

Where AI Agents Work Well (And Where They Don’t)

AI agents are not universally good or bad. Their value depends entirely on the task.

Understanding this boundary is what separates sustainable SEO workflows from fragile ones.

Best-Fit Use Cases

AI agents excel in areas where structure, scale, and pattern detection matter more than judgment.

Technical SEO audits are a strong fit because they involve repeatable checks. Status codes, redirect chains, broken links, and crawl anomalies can be identified far faster by machines than humans.

Crawl budget analysis is another area where agents shine. They can surface which URLs are crawled frequently but deliver no value, helping you see inefficiencies that would take weeks to uncover manually.

Internal linking analysis benefits from AI because link graphs are complex. Agents can detect imbalance, orphaned pages, and excessive links to low-priority URLs.
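As a small illustration of the link-graph checks described above, the sketch below counts inbound internal links, lists orphaned pages, and measures what share of links point at low-priority URLs. The edge list, the `low_priority` set, and the choice of `/` as the only entry point are assumptions for the example.

```python
from collections import Counter


def analyze_links(links, all_urls, low_priority, entry_points=frozenset({"/"})):
    """links is a list of (source_url, target_url) internal link pairs."""
    # Count inbound links per target, ignoring self-links.
    inbound = Counter(target for source, target in links if source != target)
    # Orphans: known URLs with no inbound internal links (entry points excluded).
    orphans = sorted(
        u for u in all_urls if inbound[u] == 0 and u not in entry_points
    )
    total = sum(inbound.values())
    low_share = sum(inbound[u] for u in low_priority) / total if total else 0.0
    return inbound, orphans, low_share


links = [
    ("/", "/guide"),
    ("/", "/tag/misc"),
    ("/guide", "/tag/misc"),
    ("/guide", "/pricing"),
    ("/pricing", "/tag/misc"),
]
all_urls = ["/", "/guide", "/pricing", "/tag/misc", "/forgotten-post"]

inbound, orphans, low_share = analyze_links(links, all_urls, low_priority={"/tag/misc"})
print("orphans:", orphans)
print("share of links to low-priority pages:", low_share)
```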

Index bloat detection is especially well-suited for AI agents. Slow-growing bloat often goes unnoticed by humans, but agents catch it early by correlating crawl data and index coverage trends.
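One simple way to express "slow-growing bloat" is to track, per snapshot, the share of indexed pages that never earn an impression, and flag a sustained rise. The sketch below assumes weekly snapshots with two made-up fields, `indexed` and `with_impressions`. The three-week streak threshold is arbitrary.

```python
def bloat_ratio(indexed, with_impressions):
    """Share of indexed pages that received no impressions."""
    return (indexed - with_impressions) / indexed if indexed else 0.0


def rising_bloat(snapshots, min_consecutive=3):
    """Return (flagged, ratios); flagged is True after min_consecutive straight increases."""
    ratios = [bloat_ratio(s["indexed"], s["with_impressions"]) for s in snapshots]
    streak = 0
    for prev, cur in zip(ratios, ratios[1:]):
        streak = streak + 1 if cur > prev else 0
        if streak >= min_consecutive:
            return True, ratios
    return False, ratios


weekly = [
    {"indexed": 1000, "with_impressions": 800},
    {"indexed": 1100, "with_impressions": 820},
    {"indexed": 1250, "with_impressions": 830},
    {"indexed": 1400, "with_impressions": 840},
]

flagged, ratios = rising_bloat(weekly)
print(flagged, [round(r, 2) for r in ratios])
```

No single week in this data looks alarming. The trend does, and that is the kind of drift humans rarely notice.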

These use cases align closely with deeper frameworks discussed in “How to Fix Index Bloat and Crawl Budget Issues in WordPress Tech Blogs” and “Why Programmatic SEO Fails Without Proper Crawl Budget Control,” where scale without control is the real enemy.

Poor-Fit Use Cases

Where AI agents struggle is where SEO becomes subjective.

Content quality evaluation is a weak area for automation. Agents can measure length, structure, and even readability, but they cannot judge usefulness, originality, or trustworthiness with any reliability.

E-E-A-T judgment is another poor fit. Experience, expertise, authoritativeness, and trustworthiness are contextual and human. An AI agent can flag missing author bios. It cannot assess whether content genuinely demonstrates authority.

Strategic prioritization is perhaps the worst use case. Deciding what to fix first depends on risk tolerance, resources, timelines, and business goals. AI agents lack all of these inputs.

Step-by-Step: How to Safely Use AI Agents in SEO Audits

Using AI agents safely is not about limiting them. It’s about placing them correctly in the workflow.

Step 1: Define Audit Scope Clearly

Before running any agent, define boundaries.

Be explicit about:

- What the agent is allowed to analyze

- What it is not allowed to recommend

- What it is never allowed to change

This prevents scope creep. An agent designed to analyze crawl data should not be suggesting content deletions or monetization changes.
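One way to keep those three boundaries honest is to write them down as data and filter the agent's output against them. The sketch below is a hypothetical policy object, not a feature of any specific tool. The area names, action names, and targets are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuditScope:
    analyze_areas: frozenset    # what the agent is allowed to analyze
    blocked_actions: frozenset  # what it is not allowed to recommend
    never_change: frozenset     # what it is never allowed to change

    def split(self, recommendations):
        """Separate in-scope recommendations from out-of-scope ones."""
        kept, dropped = [], []
        for rec in recommendations:
            in_scope = (
                rec["area"] in self.analyze_areas
                and rec["action"] not in self.blocked_actions
            )
            (kept if in_scope else dropped).append(rec)
        return kept, dropped

    def may_change(self, target):
        """Hard stop for protected targets, regardless of the recommendation."""
        return target not in self.never_change


scope = AuditScope(
    analyze_areas=frozenset({"crawl", "indexing"}),
    blocked_actions=frozenset({"delete_content", "change_monetization"}),
    never_change=frozenset({"robots.txt", "canonical_tags"}),
)

recs = [
    {"area": "crawl", "action": "fix_redirect_chain", "target": "/old"},
    {"area": "crawl", "action": "delete_content", "target": "/thin-page"},
    {"area": "content", "action": "rewrite_intro", "target": "/guide"},
]

kept, dropped = scope.split(recs)
print(len(kept), "kept,", len(dropped), "dropped")
```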

Step 2: Use AI Agents as Analysts, Not Executors

The safest implementations I’ve seen treat AI agents as read-only analysts.

They observe. They flag. They suggest.

They do not execute changes automatically.

Read-only permissions protect you from cascading failures. Action permissions should only exist where rollback is easy and impact is limited.
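The rule "action permissions only where rollback is easy and impact is limited" can be written as a small gate. This is a sketch under assumptions: the action names and the ten-URL cap are placeholders, and the default is fully read-only.

```python
def execution_mode(action, affected_urls, reversible_actions=frozenset(), max_auto_urls=0):
    """Return "apply" only for reversible, small-impact actions; otherwise "suggest"."""
    if action in reversible_actions and len(affected_urls) <= max_auto_urls:
        return "apply"
    return "suggest"


# A hypothetical policy: only adding internal links is considered easy to undo,
# and only when ten or fewer URLs are touched.
policy = {"reversible_actions": frozenset({"add_internal_link"}), "max_auto_urls": 10}

small = execution_mode("add_internal_link", ["/a", "/b"], **policy)
large = execution_mode("add_internal_link", [f"/p/{i}" for i in range(500)], **policy)
risky = execution_mode("set_noindex", ["/a"], **policy)
default = execution_mode("add_internal_link", ["/a"])  # no policy given: read-only

print(small, large, risky, default)
```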

Step 3: Validate Findings Manually

Validation is not optional.

Effective teams:

- Sample flagged issues instead of trusting totals

- Cross-check findings against known benchmarks

- Compare agent output with historical performance

This step filters noise from signal. It also helps humans learn from the agent instead of deferring to it.
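Sampling instead of trusting totals can be as simple as the sketch below: draw a reproducible random sample of flagged URLs, have a person review each one, and estimate how often the agent was right. The sample size, the seed, and the URL pattern are arbitrary choices for the example.

```python
import random


def sample_for_review(flagged_urls, sample_size=20, seed=0):
    """Pick a reproducible random sample of flagged URLs for manual checking."""
    rng = random.Random(seed)
    urls = sorted(flagged_urls)
    return rng.sample(urls, min(sample_size, len(urls)))


def estimated_precision(review_results):
    """review_results maps a sampled URL to True if a human confirmed the issue."""
    if not review_results:
        return None
    return sum(review_results.values()) / len(review_results)


flagged = [f"/p/{i}" for i in range(500)]
to_review = sample_for_review(flagged, sample_size=10)

# Hypothetical outcome of a manual review of four sampled URLs.
reviewed = {"/p/1": True, "/p/2": False, "/p/3": True, "/p/4": True}
precision = estimated_precision(reviewed)
print(len(to_review), "URLs to review; estimated precision:", precision)
```

If only three out of four sampled flags hold up, a headline number like "500 issues found" deserves the same discount.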

Step 4: Layer Human Strategy on Top

The agent gives you data. Strategy turns data into decisions.

This is where humans decide:

- Which issues matter now

- Which fixes align with goals

- Which recommendations to ignore entirely

AI agents do not own outcomes. Humans do.

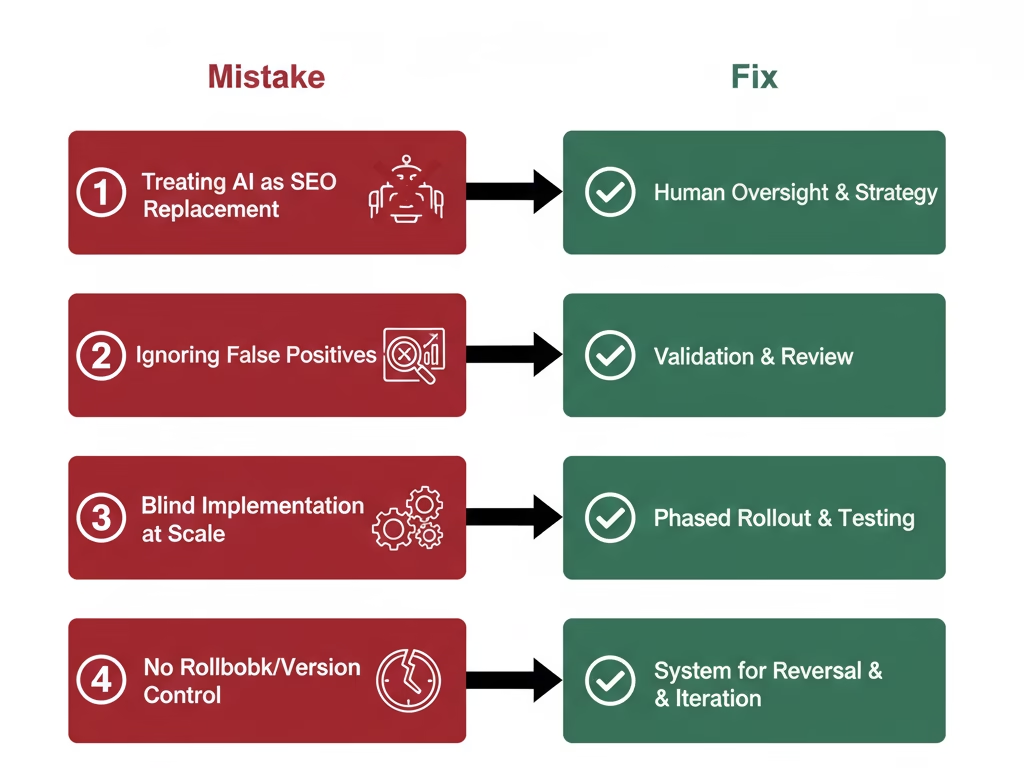

Common Mistakes When Adopting AI Agents for SEO

Most failures with AI agents don’t come from bad tools. They come from bad assumptions. Teams assume intelligence where there is automation, and judgment where there is pattern matching.

Treating AI Agents as SEO Replacements

This is the fastest way to damage a site.

AI agents do not replace SEO thinking. They replace repetitive analysis. When teams start deferring decisions to agents, they lose the ability to explain why something should or should not be changed. That gap eventually shows up as ranking instability.

If you cannot justify an SEO change without referencing the agent’s output, you shouldn’t make that change.

Ignoring False Positives

AI agents are tuned to catch as much as possible, not to be right every time. In other words, they favor recall over precision.

They will flag:

- Edge cases

- Temporary anomalies

- Issues that look statistically relevant but are strategically irrelevant

Teams that treat every alert as urgent end up chasing noise. This burns time, creates unnecessary changes, and increases the risk of self-inflicted SEO damage.

False positives are not bugs. They are a byproduct of scale. Humans exist in the workflow to filter them.
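Part of that filtering can be mechanical. Temporary anomalies, for example, tend to vanish between runs, so one cheap filter is to surface only flags that appear in several audits. The sketch below assumes each run is stored as a set of `(url, issue)` pairs. The threshold of three runs is an arbitrary starting point.

```python
from collections import Counter


def persistent_flags(runs, min_runs=3):
    """Keep only (url, issue) pairs seen in at least min_runs of the supplied runs."""
    counts = Counter(flag for run in runs for flag in run)
    return {flag for flag, seen in counts.items() if seen >= min_runs}


runs = [
    {("/a", "5xx"), ("/b", "slow_response")},
    {("/b", "slow_response")},
    {("/b", "slow_response"), ("/c", "404")},
]

persistent = persistent_flags(runs)
print(persistent)
```

This removes one class of noise only. Flags that are persistent but strategically irrelevant still need a human to dismiss them.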

Blind Implementation at Scale

The most dangerous phrase in AI-driven SEO is “apply globally.”

SEO changes compound. A small mistake applied to thousands of URLs becomes a structural problem. AI agents surface patterns quickly, but they cannot predict second-order effects.

Any recommendation that affects templates, internal links, indexing rules, or crawl behavior must be tested in isolation first. No exceptions.
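Testing in isolation needs a stable way to decide which URLs belong to the test group. One common approach, sketched here, is to hash each URL and take a fixed percentage. The `salt` value and the 5% default are assumptions. Changing the salt produces a different test group.

```python
import hashlib


def in_test_group(url, percent=5, salt="pilot-1"):
    """Deterministically assign a URL to the test group based on a hash bucket (0-99)."""
    digest = hashlib.sha256(f"{salt}:{url}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 100
    return bucket < percent


urls = [f"/blog/post-{i}" for i in range(1000)]
test_group = [u for u in urls if in_test_group(u)]
print(len(test_group), "of", len(urls), "URLs selected for the isolated test")
```

Because the assignment depends only on the URL and the salt, the same pages stay in the test group across runs. That makes before-and-after comparisons meaningful.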

No Rollback or Version Control

This is a quiet operational failure.

Teams make AI-driven changes without tracking:

- What was changed

- When it was changed

- Why it was changed

When rankings drop weeks later, no one can trace the cause. SEO becomes guesswork. Version control and change logs are not optional in AI-assisted workflows. They are insurance.
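A change log does not need special tooling. Below is a minimal sketch that appends one JSON line per change, recording the what, when, and why. The field names and the file name are assumptions, and a temporary directory is used so the example leaves nothing behind in a real project.

```python
import json
import os
import tempfile
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class ChangeRecord:
    what: str
    why: str
    urls: list
    source: str      # for example "agent" or "human"
    when: str = ""   # filled in automatically when left empty

    def __post_init__(self):
        if not self.when:
            self.when = datetime.now(timezone.utc).isoformat()


def append_change(path, record):
    """Append one change as a JSON line."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(asdict(record)) + "\n")


def read_changes(path):
    """Load the full change history."""
    with open(path, encoding="utf-8") as fh:
        return [json.loads(line) for line in fh if line.strip()]


log_path = os.path.join(tempfile.mkdtemp(), "seo_changes.jsonl")
append_change(
    log_path,
    ChangeRecord(
        what="Added noindex to tag archive",
        why="Flagged as index bloat; confirmed by manual review",
        urls=["/tag/misc"],
        source="agent",
    ),
)

history = read_changes(log_path)
print(history[0]["when"], history[0]["what"])
```

When rankings move weeks later, this file is the first place to look.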

FAQs

Are AI agents better than traditional SEO audit tools?

They’re better at correlation, not judgment. Traditional tools show isolated issues. AI agents connect signals across systems. That makes them more powerful, but also more dangerous if misunderstood.

Can AI agents replace SEO consultants or in-house teams?

No. They can replace repetitive analysis tasks, not strategic thinking. Any claim otherwise is marketing, not reality.

How accurate are AI agent recommendations?

Accuracy varies by task. Technical detection is often strong. Strategic recommendations are unreliable without human context. Accuracy improves dramatically when humans validate and interpret output.

Are AI agents safe for large websites?

They can be, but only with strict boundaries. Large sites amplify both benefits and mistakes. Read-only analysis and phased testing are non-negotiable at scale.

Do AI agents help recover from Google penalties?

They can surface technical and structural issues faster, but penalties are rarely solved mechanically. Recovery requires intent alignment, quality improvement, and trust rebuilding, areas where AI agents have limited insight.

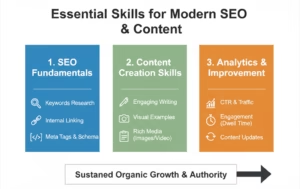

What skills do SEOs need when working with AI agents?

Interpretation, prioritization, and restraint. The more automation you use, the more valuable human judgment becomes.

Conclusion

AI agents for SEO audits are neither a shortcut nor a threat. They are leverage.

Used correctly, they help SEOs see patterns faster, audit larger sites more frequently, and catch issues before they escalate. Used carelessly, they accelerate bad decisions and hide accountability behind automation.

The teams that win with AI agents are not the ones who automate the most. They are the ones who understand where automation stops.

Let AI agents observe, analyze, and surface signals. Let humans decide what matters, what changes, and what stays untouched. That balance is not optional. It’s the difference between scalable SEO and fragile SEO.